
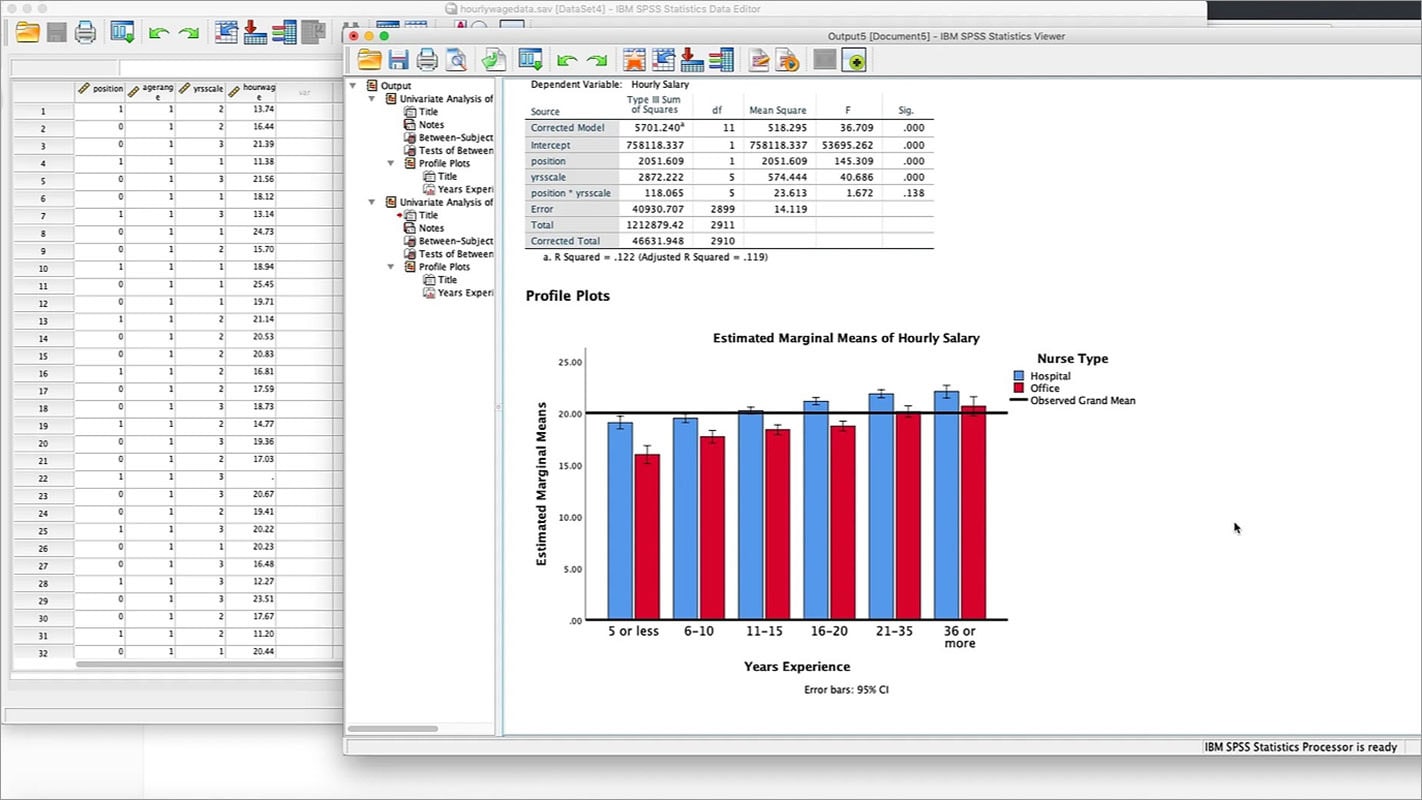

Testing Normality Using SPSS

Just because you can put a dataset in and get values out, even values that look significant, doesn't mean they are. Did all of the assumptions and requirements of the methods and tests you used actually hold for your data? SPSS is a classic example, and the social sciences have seen a series of discredited papers in recent years due to poor application of statistical methods.

Similar to Figure 2, a data point (x, y) denotes that for a fraction y of the time, the change in utilization is above x.
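
To make the "did the assumptions hold?" question concrete, here is a minimal sketch in plain Python (standard library only) of one normality check, the Jarque-Bera test. It is a swapped-in technique chosen for illustration, not what SPSS runs, and the function name `jarque_bera`, the simulated samples and the 0.05 threshold are assumptions. The test compares sample skewness and excess kurtosis with their values under normality (both 0) and relies on a large-sample chi-square approximation, so it is unreliable for small samples.

```python
import math
import random


def jarque_bera(sample):
    """Return (JB statistic, asymptotic p-value) for a sample.

    JB = n/6 * (S**2 + K**2 / 4), where S is the sample skewness and K the
    excess kurtosis. Under normality JB is asymptotically chi-square with
    2 degrees of freedom, whose survival function is exp(-x / 2).
    """
    n = len(sample)
    if n < 3:
        raise ValueError("need at least 3 observations")
    mean = sum(sample) / n
    # Biased (divide-by-n) central moments, as in the usual JB definition.
    m2 = sum((x - mean) ** 2 for x in sample) / n
    m3 = sum((x - mean) ** 3 for x in sample) / n
    m4 = sum((x - mean) ** 4 for x in sample) / n
    if m2 == 0:
        raise ValueError("sample has zero variance")
    skew = m3 / m2 ** 1.5
    excess_kurtosis = m4 / m2 ** 2 - 3.0
    jb = n / 6.0 * (skew ** 2 + excess_kurtosis ** 2 / 4.0)
    return jb, math.exp(-jb / 2.0)


if __name__ == "__main__":
    rng = random.Random(0)
    # Hypothetical data: one roughly normal sample, one clearly skewed sample.
    normal_data = [rng.gauss(0, 1) for _ in range(500)]
    skewed_data = [rng.expovariate(1.0) for _ in range(500)]
    for name, data in [("normal", normal_data), ("exponential", skewed_data)]:
        jb, p = jarque_bera(data)
        verdict = "no evidence against normality" if p >= 0.05 else "normality rejected"
        print(f"{name}: JB={jb:.2f}, p={p:.3g} -> {verdict}")
```

A large p-value is not proof of normality; it only means this particular test found no evidence against it, which is one more reason to look at the data itself rather than just read off a number.
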

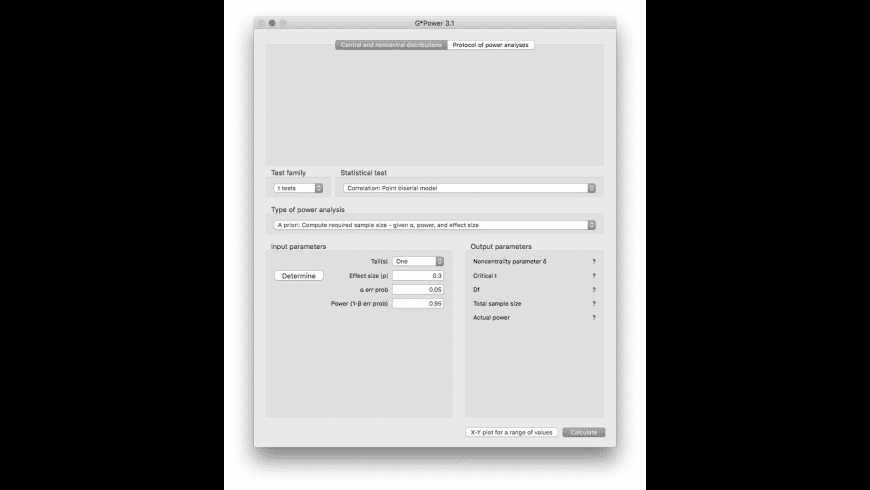

Software For Statistical Analyses Of Test Items: How To Correctly Use

Would we want civil engineers using tools like this to build bridges? Or people designing nuclear reactors? To me, this is worse, not better, unless it somehow helps you actually understand when, why, and how to use these tools correctly.

This is a well-known problem in cognitive systems engineering, which sometimes seems to promote designs that look backwards to an interaction designer focused on creating for everyday consumption. You don't want to design the control room for a nuclear reactor the way you design a webpage, because under no circumstances can you sacrifice correctness for ease. Lives could be in the balance, especially once you start talking about applications in domains like medicine.

Free and open-source statistical analysis software is capable of integrating, analyzing, and interpreting massive amounts of data within a statistical framework. So, while I haven't looked closely at this tool, all I saw was talk of the interface and the ease of getting results even if "you don't know where to start". But a tool that makes it simple to get an answer is not the same as one that makes it simple to get a correct and valid answer.

Science is a means to an end in many cases. Only 39% of studies were found to be replicable, and much of the blame falls on poor methods and techniques like p-hacking. Yes, we should, but dumbing down interfaces in ways that don't provide real guardrails or hand-holding for proper application is not making good science easier.

> Not creating more complex tools for no reason.

Nowhere have I or others suggested that. It's about knowing which tools to use and using them properly so that you get valid answers. If you haven't been paying attention, the social sciences have been plagued by an epidemic of reproducibility failures - see. Science isn't running stats tools.
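
The p-hacking point can be illustrated with a small simulation. This is a hedged sketch, not a model of any real study: it assumes pure-noise data with a known standard deviation of 1, and a hypothetical researcher who measures several independent outcomes and reports whichever one reaches p < 0.05. The function names and parameters (`two_sample_z_pvalue`, `false_positive_rate`, `n_outcomes` and so on) are made up for this example.

```python
import random
from statistics import NormalDist, fmean


def two_sample_z_pvalue(a, b, sigma=1.0):
    """Two-sided p-value for a difference in means when sigma is known."""
    se = sigma * (1 / len(a) + 1 / len(b)) ** 0.5
    z = (fmean(a) - fmean(b)) / se
    return 2 * (1 - NormalDist().cdf(abs(z)))


def false_positive_rate(n_outcomes, n_studies=2000, n_per_group=30, alpha=0.05, seed=1):
    """Fraction of null studies in which at least one of n_outcomes tests is 'significant'.

    Every outcome is pure noise (no real effect), so any 'significant'
    result counted here is a false positive.
    """
    rng = random.Random(seed)
    hits = 0
    for _ in range(n_studies):
        pvals = []
        for _ in range(n_outcomes):
            a = [rng.gauss(0, 1) for _ in range(n_per_group)]
            b = [rng.gauss(0, 1) for _ in range(n_per_group)]
            pvals.append(two_sample_z_pvalue(a, b))
        if min(pvals) < alpha:  # report whichever outcome "worked"
            hits += 1
    return hits / n_studies


if __name__ == "__main__":
    for k in (1, 5):
        print(f"{k} outcome(s) tested: false positive rate ~ {false_positive_rate(k):.3f}")
```

With independent tests, the chance of at least one false positive is 1 - (1 - α)^k, about 0.23 for k = 5 at α = 0.05, so the simulated rate for five outcomes should land near that rather than near the nominal 0.05.
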

When we're not talking about nuclear reactors but, for example, statistics, where the consequences are abstract, it might be tempting to err on the side of ease. In fact, any domain you don't understand well enough will tempt you to design for ease over correctness. Ideally, the designer should understand the domain well enough to create a design that makes it easy to be correct and hard to make an error. There's a lot of stuff out there, however, that has been designed for neither ease nor correctness, but whose design is arbitrarily dictated by the table layout of a database, or some other random technical constraint that has nothing to do with the problem domain.

> Like it or not, SPSS made science much easier.

No, it didn't. The potential gain in productivity from designing for ease is vastly overshadowed by the risks associated with making an error.

Of course, it doesn't always take a statistician to do the necessary statistical work. I am no physicist, yet I can certainly apply the Clausius-Clapeyron equation as needed. Politicians, administrators, and even some other scientists see statistics as merely a badge to be placed atop their own work for validation. Well, ladies and gentlemen, statistics is more than that. It is an empirical science in its own right. Robust statistical analyses require knowledge, judgement, and, increasingly, specialist expertise. Shoddy research methods could do anything from giving false hope to actually endangering lives.

To market a product as a way to jettison the statistician is so shortsighted as to be intellectual malpractice. Forgive me if I seem overly aggressive, but I have grown weary of my profession, and my colleagues', being sidelined and belittled.

Which is to say: since it's not a logical impossibility and not a well-defined concept, and our technology and understanding are improving, odds are we'll at least make headway towards it, to the point that it's already pretty good. I understand that by answering in the negative (no, it's not possible), I would put myself among the "64k is enough for anybody" type of comments.

Nowhere did I see any application of train/test or sample/resample methods to try to control for over-fitting in the prediction application, or to truly estimate how predictive or replicable such a technique would be in the real world. While I appreciate the work required to put something like this together (a lot of it looks like a GUI for my own exploratory functions and scripts in R, for example), I genuinely believe this approach is more dangerous than helpful, and more likely to lead to false conclusions. Hmmm.
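
A minimal sketch of the kind of check the comment asks for, assuming a toy one-predictor regression and using k-fold cross-validation as one common resampling approach (a single train/test split or the bootstrap would be others). The data, the two models (`fit_line`, a straight-line least-squares fit, and `fit_1nn`, a 1-nearest-neighbour model that memorises its training data) and all parameters are hypothetical, chosen only to show how in-sample error can flatter a flexible model.

```python
import random


def fit_line(xs, ys):
    """Ordinary least squares fit of y = a + b*x; returns a predict function."""
    n = len(xs)
    mx, my = sum(xs) / n, sum(ys) / n
    sxx = sum((x - mx) ** 2 for x in xs)
    sxy = sum((x - mx) * (y - my) for x, y in zip(xs, ys))
    b = sxy / sxx
    a = my - b * mx
    return lambda x: a + b * x


def fit_1nn(xs, ys):
    """1-nearest-neighbour regression: predicts the y of the closest training x."""
    pairs = list(zip(xs, ys))
    return lambda x: min(pairs, key=lambda p: abs(p[0] - x))[1]


def mse(model, xs, ys):
    """Mean squared prediction error of model on (xs, ys)."""
    return sum((model(x) - y) ** 2 for x, y in zip(xs, ys)) / len(xs)


def k_fold_mse(fit, xs, ys, k=5, seed=0):
    """Average held-out MSE over k folds; each point is held out exactly once."""
    idx = list(range(len(xs)))
    random.Random(seed).shuffle(idx)
    folds = [idx[i::k] for i in range(k)]
    errors = []
    for fold in folds:
        held_out = set(fold)
        train = [i for i in idx if i not in held_out]
        model = fit([xs[i] for i in train], [ys[i] for i in train])
        errors.append(mse(model, [xs[i] for i in fold], [ys[i] for i in fold]))
    return sum(errors) / k


if __name__ == "__main__":
    rng = random.Random(42)
    xs = [rng.uniform(0, 10) for _ in range(100)]
    ys = [2.0 + 0.5 * x + rng.gauss(0, 1) for x in xs]  # simulated: linear trend plus noise
    for name, fit in [("linear", fit_line), ("1-NN", fit_1nn)]:
        train_err = mse(fit(xs, ys), xs, ys)
        cv_err = k_fold_mse(fit, xs, ys)
        print(f"{name}: training MSE={train_err:.2f}, 5-fold CV MSE={cv_err:.2f}")
```

The 1-NN model reproduces its training data perfectly (zero training error), yet its cross-validated error should come out clearly worse than the straight line's; in-sample fit alone says little about how well a model will hold up on new data.
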


Author: Fred
